Understanding Accepted AI Lines vs. All Merged Lines in the AI Adoption Report

Last updated: January 29, 2026

Overview

The "Accepted AI Lines vs. All Merged Lines (Organization)" metric helps you understand the impact of AI coding assistants on your engineering organization by comparing AI-generated code that makes it into your codebase against total code output.

This report shows directional change across the organization: how much code is being generated with AI versus how much code is being merged?

The Two Components

1. Accepted AI Lines

What it measures: The total volume of AI-generated code that developers accepted and merged into your codebase.

Calculation includes:

Lines of code added from AI suggestions that were accepted

Lines of code deleted from AI suggestions that were accepted

Accepted AI tab completions (converted to an equivalent line count)

Filters applied:

Only counts active contributors

Excludes non-employees and non-contributor roles
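The calculation and filters above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not Span's implementation: the `AcceptanceEvent` record and its field names are hypothetical, and so is the `TAB_TO_LINES` factor, because the article does not say how accepted tabs are converted to an equivalent line count. The active-contributor filter is modeled as a plain set of people that is assumed to already exclude non-employees and non-contributor roles.

```python
from dataclasses import dataclass

# HYPOTHETICAL conversion factor: the article does not document how an
# accepted tab is converted to an equivalent line count.
TAB_TO_LINES = 1.0


@dataclass
class AcceptanceEvent:
    """One accepted AI suggestion (hypothetical record shape)."""
    author: str
    lines_added: int
    lines_deleted: int
    tabs_accepted: int


def accepted_ai_lines(events, active_contributors):
    """Sum accepted added lines, deleted lines, and tab equivalents,
    counting only authors in the active-contributor set."""
    total = 0.0
    for event in events:
        if event.author not in active_contributors:
            continue  # filtered out: not an active contributor
        total += (
            event.lines_added
            + event.lines_deleted
            + event.tabs_accepted * TAB_TO_LINES
        )
    return total


events = [
    AcceptanceEvent("ana", lines_added=40, lines_deleted=10, tabs_accepted=5),
    AcceptanceEvent("contractor", lines_added=100, lines_deleted=0, tabs_accepted=0),
]
print(accepted_ai_lines(events, active_contributors={"ana"}))  # 55.0
```

Only the "ana" event survives the filter, contributing 40 added + 10 deleted + 5 tabs × 1.0 = 55 lines.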

2. All Merged Lines (Organization)

What it measures: The total volume of code changes merged across your entire organization during the same time period.

Calculation includes:

All lines of code added

All lines of code removed

All lines of code modified

Filters applied:

Only merged pull requests

Only active developer contributors

Excludes generated code files, vendor directories, and other specified patterns
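A comparable sketch for the organization-wide total is below. The `PullRequest` and `FileChange` shapes and the `EXCLUDED_PATTERNS` list are hypothetical stand-ins for whatever exclusion rules are actually configured; the point is the order of filtering: merged PRs only, active contributors only, then excluded file patterns, then summing added, removed, and modified lines.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch

# HYPOTHETICAL exclusion patterns; the real list of generated files,
# vendor directories, and other patterns is configured elsewhere.
EXCLUDED_PATTERNS = ["vendor/*", "*.generated.*", "*_pb2.py"]


@dataclass
class FileChange:
    path: str
    added: int
    removed: int
    modified: int


@dataclass
class PullRequest:
    author: str
    merged: bool
    files: list = field(default_factory=list)


def is_excluded(path):
    # fnmatch's "*" also matches "/", so "vendor/*" covers nested paths.
    return any(fnmatch(path, pattern) for pattern in EXCLUDED_PATTERNS)


def all_merged_lines(pull_requests, active_contributors):
    """Sum added, removed, and modified lines over merged PRs by active
    contributors, skipping files that match an excluded pattern."""
    total = 0
    for pr in pull_requests:
        if not pr.merged or pr.author not in active_contributors:
            continue
        for change in pr.files:
            if is_excluded(change.path):
                continue
            total += change.added + change.removed + change.modified
    return total


prs = [
    PullRequest("ana", True, [FileChange("src/app.py", 30, 5, 10),
                              FileChange("vendor/lib/client.go", 500, 0, 0)]),
    PullRequest("ana", False, [FileChange("src/draft.py", 80, 0, 0)]),  # not merged
    PullRequest("bot", True, [FileChange("src/auto.py", 20, 0, 0)]),    # not active
]
print(all_merged_lines(prs, active_contributors={"ana"}))  # 45
```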

Notes on Filters:

This chart is not filterable by People or Team: it always shows all accepted lines and all merged lines for the organization, regardless of which People or Teams are selected at the top of the page.

Tool filters:

The tool filter WILL be respected in the Accepted vs. Merged LOC chart.

If you filter by a specific tool (except GitHub Copilot, which does not send Span the granular data this requires), an additional column appears in the grid view at the bottom of the report showing Accepted AI Lines vs. All Merged Lines at the person and team level.

The Combined Metric: What It Tells You

Taken together, these two values produce a ratio, expressed as a percentage, that shows what proportion of your organization's merged code originated from AI-assisted development.

Formula: (Accepted AI Lines ÷ All Merged Lines) × 100 = AI Adoption %
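As a quick check of the arithmetic, the formula can be written as a small Python function. The function name and the choice to return `None` when nothing was merged are assumptions for illustration; how the report itself handles an empty period is not documented here. For example, 3,000 accepted AI lines against 12,000 merged lines gives 25%.

```python
def ai_adoption_pct(accepted_ai_lines, all_merged_lines):
    """Return (Accepted AI Lines / All Merged Lines) x 100.

    Returns None for a period with no merged lines instead of dividing
    by zero (an assumption, not documented report behavior).
    """
    if all_merged_lines == 0:
        return None
    # Multiply before dividing; mathematically identical to the formula.
    return accepted_ai_lines * 100 / all_merged_lines


print(ai_adoption_pct(3_000, 12_000))  # 25.0
print(ai_adoption_pct(500, 0))         # None
```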

Key Insights You Can Gain

1. AI Adoption Scale

See the absolute volume of AI-assisted code versus total engineering output

Understand actual AI tool usage in concrete terms

2. AI Contribution Percentage

Identify what percentage of your organization's code changes is AI-assisted

Measure the intensity of AI tool adoption across your organization

3. Adoption Trends Over Time

Accelerating: AI lines growing faster than total lines

Proportional growth: Both metrics rising at similar rates

Stalling: AI lines plateau while total development continues

Declining: AI contribution percentage decreasing

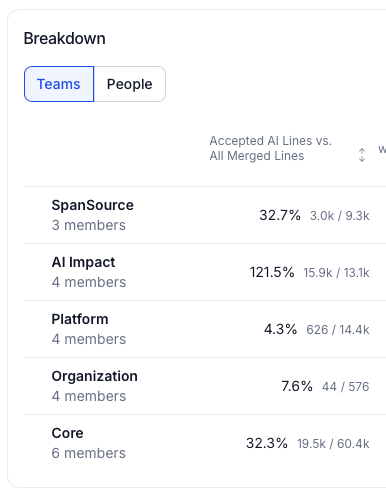
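One way to turn the four trend patterns into a rule is to compare how fast AI lines and total lines grew between two periods. The sketch below is hypothetical: the `classify_trend` name and the 5% `tolerance` band for "similar" or "flat" are illustrative choices, not thresholds the report publishes, and it assumes the previous period's values are positive.

```python
def classify_trend(prev_ai, prev_total, curr_ai, curr_total, tolerance=0.05):
    """Label the change between two periods with one of the four patterns.

    Assumes all previous-period values are positive. `tolerance` is a
    HYPOTHETICAL 5% band for treating growth rates as "similar" or AI
    lines as "flat".
    """
    ai_growth = (curr_ai - prev_ai) / prev_ai
    total_growth = (curr_total - prev_total) / prev_total

    # Stalling: AI lines roughly flat while total output keeps growing.
    if abs(ai_growth) <= tolerance and total_growth > tolerance:
        return "stalling"
    gap = ai_growth - total_growth
    # Accelerating: AI lines grow faster than total lines.
    if gap > tolerance:
        return "accelerating"
    # AI lines grow slower than total lines, so the AI percentage falls.
    if gap < -tolerance:
        return "declining"
    # Both rising at similar rates.
    if ai_growth > 0 and total_growth > 0:
        return "proportional growth"
    return "unclassified"  # e.g. both flat or both shrinking


print(classify_trend(1_000, 10_000, 1_500, 11_000))  # accelerating
print(classify_trend(1_000, 10_000, 1_100, 11_000))  # proportional growth
print(classify_trend(1_000, 10_000, 1_010, 12_000))  # stalling
print(classify_trend(1_000, 10_000, 800, 10_000))    # declining
```

Stalling is checked first because it is a special case of a falling AI percentage that is worth calling out separately.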

4. Team Comparison

Compare adoption rates across different teams

Identify high-performing AI adopters and teams that may need support
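Team comparisons depend on the person-level column that appears in the grid view when you filter by a tool other than Copilot. Assuming you have per-person accepted and merged line counts plus each person's team (the row layout and `adoption_by_team` name here are hypothetical), a per-team percentage can be computed like this:

```python
from collections import defaultdict


def adoption_by_team(rows):
    """rows: iterable of (team, accepted_ai_lines, merged_lines), one per person.

    Sums lines per team before dividing, so each person's weight is
    proportional to how much they merged.
    """
    ai_lines = defaultdict(float)
    merged_lines = defaultdict(float)
    for team, ai, merged in rows:
        ai_lines[team] += ai
        merged_lines[team] += merged
    return {
        team: ai_lines[team] * 100 / merged_lines[team]
        for team in merged_lines
        if merged_lines[team] > 0  # skip teams with nothing merged
    }


rows = [
    ("platform", 200, 1_000),
    ("platform", 300, 1_000),
    ("mobile", 50, 1_000),
]
print(adoption_by_team(rows))  # {'platform': 25.0, 'mobile': 5.0}
```

Summing before dividing avoids letting one person with very few merged lines swing a team's average, which can happen when per-person percentages are averaged directly.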

5. Business Context

Correlate with other metrics like delivery velocity, PR cycle time, and code quality

Understand whether AI adoption corresponds to productivity improvements

Where to Find This Metric

Navigation:

Go to Insights → AI Transformation → Adoption

Select a specific AI tool (GitHub Copilot, etc.) or view all tools

Scroll to the ADOPTION section

Find the "Accepted AI Lines vs. All Merged Lines (Organization)" card

Visualization:

Two-series bar chart showing AI Lines Accepted (green) and Lines Merged (gray) over time

Ratio trend line showing the percentage over your selected time period

Summary statistics including overall ratio and AI line estimates

Available filters on the page (as noted under Notes on Filters, People and Team selections do not change this chart):

Date range

Team

Individual developer

IC level

Specific developer tool

Important Considerations

Interpretation Note: The reported percentage may be slightly inflated because developers can use AI tools throughout the development process (including in branches that get squashed or rebased). This means the metric captures AI-assisted code acceptance rather than strict final attribution.
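A small worked example may help. The scenario below is hypothetical and assumes, consistent with the note above, that lines are counted when an AI suggestion is accepted, even if some of those lines are removed on the branch before it is squashed and merged.

```python
# HYPOTHETICAL single pull request, to show acceptance vs. final attribution.
accepted_on_branch = [
    30,  # AI suggestion accepted in an early commit
    10,  # second AI suggestion accepted in a later commit
]
# The developer then deletes 8 of the first 30 AI lines by hand,
# and the branch is squashed into a 100-line merged diff.
final_merged_lines = 100
ai_lines_in_final_diff = 30 + 10 - 8  # 32 merged lines still came from AI

# What an acceptance-based metric reports for this PR.
reported_pct = sum(accepted_on_branch) * 100 / final_merged_lines
# What strict final attribution would report.
final_attribution_pct = ai_lines_in_final_diff * 100 / final_merged_lines

print(reported_pct, final_attribution_pct)  # 40.0 32.0
```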

Best Practices:

Review trends over time rather than focusing on single data points

Compare against baseline periods to measure adoption growth

Analyze alongside productivity metrics to understand impact

Use team-level breakdowns to identify adoption patterns and opportunities

Related Metrics

This metric works alongside other AI adoption indicators:

Active Users: Count of developers using AI tools

AI Days/Week: Frequency of AI tool usage per developer

Weekly/Monthly Active User Ratios: Percentage of team actively using AI tools

Together, these metrics give a fuller picture of AI coding assistant adoption and its impact in your organization.