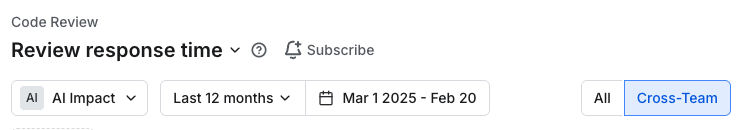

Using the Cross-Team Toggle in the Review Response Time Report

Last updated: February 20, 2026

Review Response Time measures how quickly developers provide their first review on a pull request. It tracks the time from when a review is requested (or when a PR becomes ready for review) until the first review is submitted.

This metric helps engineering leaders identify bottlenecks in the code review process, balance reviewer workload, and ensure PRs aren't sitting idle waiting for feedback.

How It's Calculated

What's measured: The time to the first review response per reviewer — subsequent reviews on the same PR are not counted again.

Unit: Hours

Two scenarios:

When a review is explicitly requested: Time from the review request to the first review completion.

When a review happens without an explicit request: Time from when the PR was opened for review (i.e., moved out of draft) to the first review.

Outlier handling: Review times exceeding 30 days are capped at 30 days to prevent extreme outliers from distorting averages.

Who's included: Only dev contributors are counted — non-developer stakeholders are excluded.
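The calculation rules above can be sketched in a few lines of Python. This is only an illustration of the described logic, not the report's actual implementation: the function name `response_time_hours` and its parameter names are hypothetical, and the sketch assumes that an explicit request time, when present, always takes precedence over the ready-for-review time.

```python
from datetime import datetime, timedelta
from typing import Optional

# Outlier cap described above: anything longer than 30 days counts as 30 days.
CAP = timedelta(days=30)


def response_time_hours(
    first_review_at: datetime,
    ready_for_review_at: datetime,
    review_requested_at: Optional[datetime] = None,
) -> float:
    """Return the first-review response time in hours (hypothetical helper)."""
    # Scenario 1: a review was explicitly requested, so the clock starts there.
    # Scenario 2: no request, so the clock starts when the PR left draft.
    if review_requested_at is not None:
        start = review_requested_at
    else:
        start = ready_for_review_at

    elapsed = first_review_at - start
    capped = min(elapsed, CAP)
    return capped.total_seconds() / 3600


# Explicit request at 09:00, first review at 15:30 the same day.
requested = response_time_hours(
    first_review_at=datetime(2026, 1, 5, 15, 30),
    ready_for_review_at=datetime(2026, 1, 4, 9, 0),
    review_requested_at=datetime(2026, 1, 5, 9, 0),
)

# No explicit request; 45 days elapsed, so the value is capped at 30 days.
unrequested = response_time_hours(
    first_review_at=datetime(2026, 2, 15),
    ready_for_review_at=datetime(2026, 1, 1),
)
```

Here `requested` is 6.5 hours and `unrequested` is 720.0 hours (30 days), which shows how the cap keeps a single stale PR from dominating an average.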

What the "Cross Team" Toggle Does

The Cross-Team toggle filters the results to show only cross-team reviews, rather than all reviews. A review is considered "cross-team" when the reviewer is from a different team than the PR author and the two teams have no hierarchical relationship: neither team is an ancestor or a descendant of the other.
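To make the cross-team rule concrete, here is a minimal sketch of the check. The team names, the `PARENT` mapping, and the functions `lineage` and `is_cross_team` are all hypothetical, and the sketch assumes each person belongs to exactly one team in a simple tree; how the product stores team membership is not specified here.

```python
from typing import Dict, Optional, Set

# Hypothetical team tree, stored as child -> parent (None marks the root).
PARENT: Dict[str, Optional[str]] = {
    "Engineering": None,
    "Platform": "Engineering",
    "Payments": "Engineering",
    "Payments-API": "Payments",
}


def lineage(team: str, parent: Dict[str, Optional[str]]) -> Set[str]:
    """Return the team itself plus all of its ancestors."""
    chain: Set[str] = set()
    current: Optional[str] = team
    while current is not None:
        chain.add(current)
        current = parent.get(current)
    return chain


def is_cross_team(
    reviewer_team: str, author_team: str, parent: Dict[str, Optional[str]]
) -> bool:
    """True when the teams differ and neither is an ancestor of the other."""
    reviewer_is_same_or_ancestor = reviewer_team in lineage(author_team, parent)
    author_is_same_or_ancestor = author_team in lineage(reviewer_team, parent)
    return not (reviewer_is_same_or_ancestor or author_is_same_or_ancestor)
```

Under these assumptions, a Platform reviewer on a Payments PR counts as cross-team (sibling teams), while a Payments reviewer on a Payments-API PR does not, because Payments is an ancestor of Payments-API.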

Is It Bidirectional When Grouping by Person?

No — it's unidirectional. When you group by person with the cross-team toggle ON, you're seeing:

How quickly that person (as a reviewer) responded to PRs authored by someone on a different team.

It does not flip to also capture reviews received by that person from outside their team. The cross-team condition is always evaluated as: "Is the reviewer's team different from the PR author's team?" — and when grouped by person, that person is the reviewer.

Summary

| Grouping by Person | Cross-Team Behavior |
| --- | --- |
| The "person" = reviewer | Filters to reviews they gave where the PR author was on a different team |
| Direction | One-way (reviewer → author team comparison) |

So if you're trying to measure both directions (e.g., how quickly Alice responds to cross-team PRs and how quickly cross-team reviewers respond to Alice's PRs), the single toggle won't cover both — you'd need to analyze from each perspective separately.
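The difference between the two perspectives can be illustrated with a small sketch. The sample records, the field names, and the `avg_by` helper are made up for illustration, the `cross_team` flag is assumed to be precomputed, and a plain mean is assumed as the aggregate. Only the first grouping (by reviewer) corresponds to what the toggle shows when grouping by person.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List

# Hypothetical review records; "hours" is the first-review response time.
reviews: List[dict] = [
    {"reviewer": "alice", "author": "bob", "cross_team": True, "hours": 4.0},
    {"reviewer": "alice", "author": "carol", "cross_team": False, "hours": 1.0},
    {"reviewer": "bob", "author": "alice", "cross_team": True, "hours": 10.0},
    {"reviewer": "alice", "author": "dave", "cross_team": True, "hours": 8.0},
]


def avg_by(records: List[dict], key: str, cross_team_only: bool = True) -> Dict[str, float]:
    """Average response hours grouped by the given field (hypothetical helper)."""
    buckets: Dict[str, List[float]] = defaultdict(list)
    for record in records:
        if cross_team_only and not record["cross_team"]:
            continue  # toggle ON: skip same-team reviews
        buckets[record[key]].append(record["hours"])
    return {name: mean(hours) for name, hours in buckets.items()}


# Reviewer perspective: how quickly each person responded to cross-team PRs.
given = avg_by(reviews, "reviewer")

# Author perspective: how quickly cross-team reviewers responded to each
# person's PRs. This is the separate analysis the toggle does not perform.
received = avg_by(reviews, "author")
```

In this sample, `given["alice"]` is 6.0 hours (the reviews she gave to Bob and Dave), while `received["alice"]` is 10.0 hours (Bob's review of her PR). The two numbers answer different questions, which is why each direction has to be analyzed separately.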

Interpreting the Data

Identifying Cross-Team Review Bottlenecks

To understand which teams are slowing down other teams in the review process:

1. Enable the Cross-Team toggle to filter to only cross-team reviews.
2. Set Group by → Team to segment by reviewer team.

This surfaces which teams are slowest at responding to review requests from outside their own team. Teams with consistently high response times in this view are potential bottlenecks for the broader organization.

Note: This view always reflects the reviewer's team perspective. To understand which teams' PRs are sitting idle the longest waiting for external review, filter the report to a specific team's authored PRs and repeat the analysis.